Read next

Machine Learning

Formal Verification of Neural Networks with Quantum Computers

Neural networks are not robust. The development of reliable predictors requires systematic methods to both assess their quality and to gain confidence in their correctness. Quantum computing can help here.

© iStock/elenaleonova

Neural networks can be unreliable for some basic conditions, such as image scaling and brightness changes. They are also highly unreliable for domain-specific conditions, such as the placement of stickers on traffic signs. Therefore, to work reliably, the model must correctly classify the sample under different lighting conditions, camera angles, etc. that occur naturally in reality.

© Goodfellow, Shlens and Szegedy 2014

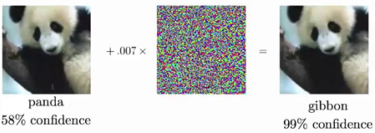

A small noisy layer is added onto the input sample to generate an adverse example that misleads the network classification. Ref: (Goodfellow, Shlens and Szegedy 2014)

This is not a new problem. Formal verification of neural networks has attracted significant interest from various communities, including machine learning, artificial intelligence, formal methods, and security. To gain a deeper understanding of the issue, consider a set of allowed perturbations, S, for each image x. This captures the manipulative power of the adversary. In image classification, S represents the perceptual similarity between images. For instance, S can be modeled as a convex shape around x. This allows us to formally specify environmental conditions and systematically test neural networks against them.

Neural networks and their certification

In this context, S represents the set of possible images that could be induced by the attack. It is also referred to as an adversarial region. It is important to note that it is practically impossible to list all the possible images in that region and simply run the network in each one to verify that it is correctly classified. Consequently, two methods exist for certifying the robustness of neural networks:

- Convex approximation

- Exact verification

The initial approach involves computing the convex shape S around the input x and propagating it throughout the entire network to obtain an output shape. The robustness of the method is then demonstrated if all concrete outputs within the output form are classified correctly.

Another approach is to formulate the ReLU (Rectified Linear Unit) with binary variables, indicating whether a particular element is active or inactive. This method allows us to represent the verification problem as a mixed-integer optimization program (MIP). For smaller networks, an input shape can be propagated with exact precision using MIP. To scale larger networks, classical approaches typically employ the first method and approximate the shape using various convex relaxations (e.g., intervals, zonotopes, and polytopes). While approximate analysis is typically faster and more scalable than exact analysis, it is not always effective in verifying robustness. Conversely, exact analysis is typically exponentially time-consuming and less scalable. However, it ensures the completeness of the result.

Where quantum machine learning comes into play…

The development of quantum computing (QC) hardware and software has made significant strides in recent years. However, the practical applications of QC remain an active area of research, as current quantum computers belong to the category of Noisy Intermediate Scale Quantum (NISQ) devices. These devices are distinguished by their relatively small number of qubits (approximately 100), noise, and limited connectivity between the qubits. Consequently, it is not currently possible to perform large and complex calculations solely on a quantum PC. Instead, current algorithms are hybrid in nature and require interaction between classical and quantum computers.

The potential of exploiting the exponentially large space of qubits has led to significant interest in combinatorial optimization across both gate-based QC and quantum annealing. As a result, we are the first to apply the combinatorial power of QC to verify the robustness of neural networks with a hybrid quantum-classical machine learning algorithm.

In our 2022 publication, we formalized the verification problem to align with the capabilities of quantum optimization algorithms. The problem is divided into two parts: the first part is broken down to fit the quadratic unconstrained binary optimization (QUBO) framework, which is used by current quantum heuristics, such as the quantum approximate optimization algorithm (QAOA). The second part is broken down to fit a linear program, which is computed with classical solvers. The approach alternates between classical AI machine learning and quantum systems. The QUBO is solved by a quantum computer or a quantum annealer, and the parameters are updated by an optimizer running on classical hardware. Given their heuristic nature, more iterations lead to better results. In our approach, we have reduced the number of iterations and imposed a limit on the maximum number of qubits required.

In summary, the challenges posed by current NISQ devices and quantum

heuristic algorithms are well-researched and being able to find suitable

applications and practical use cases is the key to unlocking the

quantum advantage. Given the combinatorial nature posed

by formal verification of neural networks, this challenge appears to

hold great promise for highlighting the quantum advantage.

References:

Franco, Nicola, Tom Wollschläger, Nicholas Gao, Jeanette Miriam Lorenz and Stephan Günnemann. 2022. “Quantum Robustness Verification: A Hybrid Quantum-Classical Neural Network Certification Algorithm.” arXiv preprint.

Goodfellow, Ian J, Jonathon Shlens and Christian Szegedy. 2014. “Explaining and Harnessing Adversarial Examples.” arXiv preprint arXiv:1412.6572.

Quellen:

Franco, Nicola, Tom Wollschläger, Nicholas Gao, Jeanette Miriam Lorenz, and Stephan Günnemann. 2022. “Quantum Robustness Verification: A Hybrid Quantum-Classical Neural Network Certification Algorithm.” arXiv preprint.

Goodfellow, Ian J, Jonathon Shlens and Christian Szegedy. 2014. “Explaining and Harnessing Adversarial Examples.” arXiv preprint arXiv:1412.6572.